Why over-tightening happens

Over-tightening usually starts with good intentions. You cleaned up a noisy workflow, the output got clearer, and that felt good, so you kept going.

The trouble is that each improvement can make another tightening step feel tempting. You want fewer weak candidates, cleaner review sessions, and stronger surfaced names. All sensible goals. But if you keep narrowing the workflow without checking whether useful discovery is still happening, you can end up blocking too much value along with the noise.

What over-tightening usually looks like

A workflow that has been tightened too far often becomes quiet across multiple review cycles without becoming clearly smarter.

- Almost nothing surfaces across repeated review windows.

- The few surfaced candidates are not obviously better than before.

- You have too little output to compare patterns calmly.

- Review notes become thin because there is barely anything to assess.

- The workflow feels safe, but not especially informative.

The difference between cleaner and too quiet

A cleaner workflow reduces vague, low-value output while keeping enough surfaced candidates to compare quality and recognise stronger names more confidently.

An over-tightened workflow does the opposite. It cuts candidate flow so much that comparison becomes harder, review feels sparse rather than clearer, and absence starts to look like quality. That is the distinction: you are not trying to remove all friction from review; you are trying to keep enough good raw material that review remains useful.

Why low output is not always a win

Some traders judge a workflow too quickly by volume alone. If fewer names appear, they assume the workflow improved. Sometimes that is true.

But lower output only helps if the remaining output is more useful and still arrives often enough to review fairly. If the workflow becomes so selective that you cannot learn much from it, the setup may now be too constrained. That matters because TEST Mode is meant to create a repeatable learning loop, not just less activity.

Questions to ask when output feels too sparse

If you suspect over-tightening, ask:

- Are you still getting enough candidates to compare patterns?

- Did quality really improve, or did volume simply collapse?

- Did review become clearer, or just thinner?

- Can you still explain what useful output looks like in this workflow?

If those answers are getting weaker, the workflow may now be too narrow to teach you much.

If a workflow has gone too quiet

Loosen one part of the workflow at a time, then review again. The aim is not to bring back noise. It is to restore enough candidate flow that the learning loop becomes useful again.

A practical way to test whether you have gone too far

You do not need to guess. Leave the workflow unchanged for a fair sample, then:

- Track whether enough candidates still surface to compare quality.

- Note whether the few surfaced names are clearly stronger, rather than simply fewer.

- Compare whether review feels clearer or merely emptier.

If learning has dropped, loosen one part of the workflow slightly and review again. That keeps you from reacting emotionally to a quiet stretch and helps separate good selectivity from over-correction.

How to loosen without undoing everything

If the workflow does look over-tightened, do not undo everything. Use the same discipline you used when tightening. Make one small change, then review again.

The aim is not to make the workflow noisy again. It is to restore enough useful candidate flow that review becomes meaningful. You want the balance point where the workflow stays selective enough to feel clear, but broad enough to keep discovery alive.

Why this matters before LIVE

An over-tightened workflow can create a false sense of security. It may look disciplined because it stays quiet, but quietness alone does not mean it is helping you make better decisions.

Before LIVE, you want a workflow that surfaces candidates worth attention, makes review clearer, and teaches you something repeatable about process quality. If the workflow no longer gives you enough useful output to do that, tightening has probably gone too far. That is not a disaster; it just means the workflow needs a little breathing room again.

Want to test the workflow for yourself?

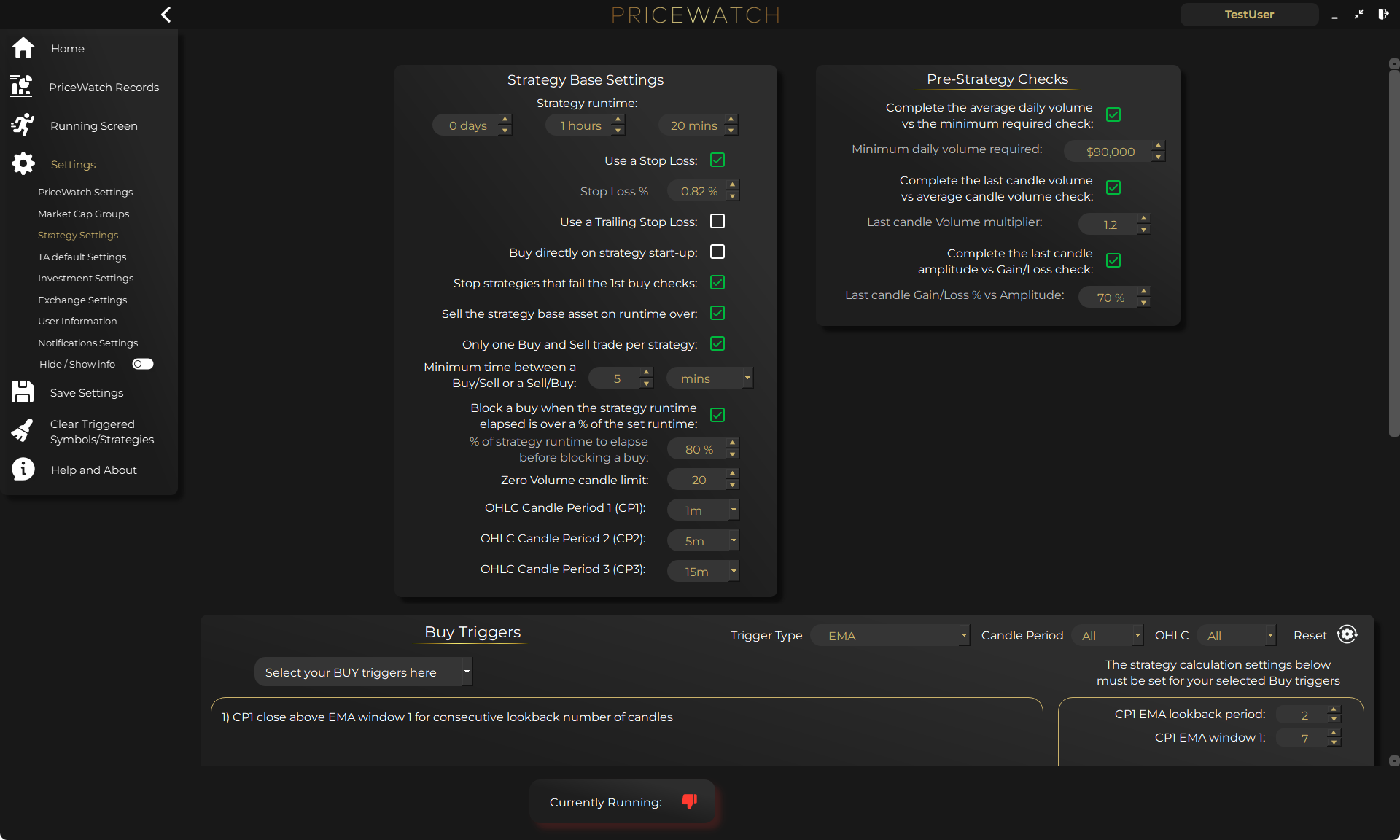

Start with PriceWatch in TEST mode and see how broader market discovery fits your process.

Who this is for (and who it is not for)

Good fit if

- You have been refining a TEST Mode workflow and the output now feels unusually sparse.

- You want a calmer review process without killing off useful discovery.

- You are trying to decide whether fewer surfaced candidates really means better quality.

Not a fit if

- You want a workflow to stay noisy so there is always something happening.

- You want to jump to LIVE before the review loop is clearly useful.

- You are looking for software to replace judgement rather than support it.

FAQ

Is a very quiet workflow automatically better?

No. A quiet workflow only helps if the remaining surfaced candidates are clearly more useful and still arrive often enough to support fair review.

How do I know if I need to loosen the workflow again?

Usually when candidate flow has become so thin that review notes stop being informative, comparison gets harder, and the workflow teaches you less rather than more.

Should I reverse several tightening changes at once?

No. Loosen one part of the workflow at a time so you can tell what actually improved the learning loop.

What is the real goal?

A workflow that is selective enough to reduce noise, but active enough to keep discovery and review meaningful.