Quick answer

A strong candidate earns the next step. It stands out for understandable reasons, fits the job your workflow was meant to do, and gives you a clear reason to look closer. A weak candidate usually creates activity without enough quality, context, or review value to justify more attention.

The goal is not to predict winners from one glance. It is to separate candidates that deserve proper review from candidates that mostly waste your time.

A strong candidate is not evidence of a good trade. It is only evidence that this output deserves structured review under your rules.

Strong versus weak is not the same as winner versus loser

A strong candidate is not guaranteed to become a successful trade, and a weak candidate is not automatically meaningless in every possible workflow.

The real question is narrower: did this surfaced result make sense for the job your workflow was built to do? That keeps the review standard realistic and useful.

What a strong candidate usually looks like

A strong candidate usually fits the job of the workflow cleanly. You can explain why it appeared, why it matches the kind of move you wanted to catch, and why it deserves at least one closer review step.

Stronger candidates also tend to look substantial, not just technically triggered. The move is clear, separates itself from nearby names, still looks worth reviewing on a second look, and does not need you to argue for why it matters.

Useful notes are usually observable rather than fuzzy: the move was more decisive than nearby names, it survived a second look, it was not obviously thin or erratic, and the reason it surfaced matched the job of the workflow.

What a weak candidate usually looks like

Weak candidates often look active without looking meaningful. They may trigger for technical reasons, but they do not stand out clearly enough to justify attention over everything else around them.

They also tend to look borderline, choppy, thin, or quick to fade on a second look. If you have to invent the case for why it should count, the output probably did not stand on its own strongly enough.

A simple review rubric

If you want a practical review tool, score each surfaced candidate 0 or 1 on three questions: fit, quality, and next step.

- Fit: did it match the job of the workflow?

- Quality: did the move look meaningful enough to stand out?

- Next step: did it create an obvious review action?

Add up the three answers. A 3/3 usually means strong for this workflow, a 2/3 means possible but still unclear somewhere, and a 0/3 or 1/3 usually means noise for this setup.
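If you keep your notes in a script or spreadsheet export, the rubric above can be sketched in a few lines of Python. This is only an illustration, not a PriceWatch feature: the `CandidateReview` class, its field names, and the `"AAA"`-style symbols are all hypothetical, and the labels simply mirror the 3/3, 2/3, and 0 to 1/3 bands described above.

```python
from dataclasses import dataclass


@dataclass
class CandidateReview:
    """One reviewed candidate, scored 0 or 1 on each rubric question.

    Hypothetical structure for illustration; not part of PriceWatch.
    """
    symbol: str       # placeholder name for the surfaced candidate
    fit: bool         # did it match the job of the workflow?
    quality: bool     # did the move look meaningful enough to stand out?
    next_step: bool   # did it create an obvious review action?

    def score(self) -> int:
        # Each "yes" counts as 1, so the total ranges from 0 to 3.
        return int(self.fit) + int(self.quality) + int(self.next_step)

    def label(self) -> str:
        total = self.score()
        if total == 3:
            return "strong"
        if total == 2:
            return "possible"
        return "noise"  # covers both 0/3 and 1/3


reviews = [
    CandidateReview("AAA", fit=True, quality=True, next_step=True),
    CandidateReview("BBB", fit=True, quality=False, next_step=True),
    CandidateReview("CCC", fit=False, quality=False, next_step=True),
]

for review in reviews:
    print(f"{review.symbol}: {review.score()}/3 -> {review.label()}")
```

Keeping the three answers as separate fields, rather than storing only the total, means you can later see which question a 2/3 candidate failed.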

A worked example

If a workflow is meant to surface stronger-than-normal movement, a stronger candidate is one you can explain quickly, that stands out from the surrounding names, and that clearly earns chart review or a comparable next step.

A weaker candidate may still have triggered, but it looks messy, borderline, or hard to distinguish from the surrounding clutter. A borderline candidate may technically fit, but still fail to separate itself enough to earn strong priority. The point is not that one would definitely become a trade. The point is that one did a better job of earning attention.

Judge whether it earned attention

A candidate does not need to prove the whole trade case immediately. It needs to justify the next review step clearly enough that you are not forcing the logic.

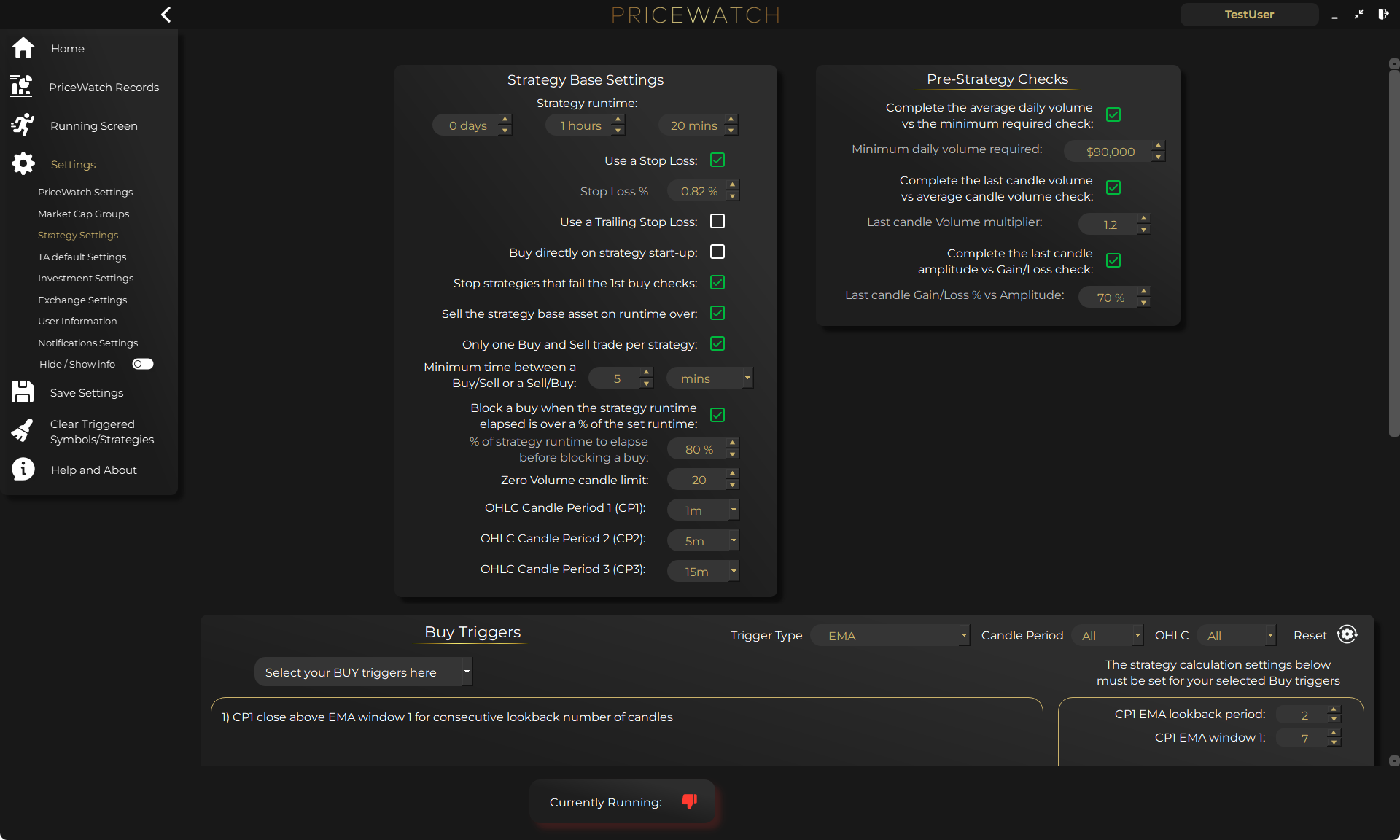

How to do this inside PriceWatch

PriceWatch gives you a more grounded review path than just reacting to a list of names.

- Use test mode while you are learning, so you can focus on review quality instead of money pressure.

- Use the Live Feed on the Running Screen to see what actually surfaced and what passed the checks you set.

- Write one short note on fit, quality, and next step while the context is still fresh.

- Note what you actually saw: clean move, messy move, quick fade, obvious standout, or background noise.

- Review later in the Records Screen with the relevant filters so you are comparing the right category of output.

- Look for repeated patterns, such as weak names caused by loose thresholds, weak supporting checks, too-broad market scope, or missing exclusions.
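Spotting repeated patterns is easier if the short notes are tallied rather than reread one by one. The sketch below assumes you keep your own notes by hand as `(label, observation)` pairs, using the observation wording from the list above. The `notes` data and the `tally_observations` helper are hypothetical and do not read anything from the Records Screen.

```python
from collections import Counter

# Hypothetical hand-written notes: (rubric label, what you actually saw).
notes = [
    ("noise", "quick fade"),
    ("noise", "background noise"),
    ("strong", "clean move"),
    ("noise", "quick fade"),
    ("possible", "messy move"),
    ("strong", "obvious standout"),
]


def tally_observations(notes):
    """Count how often each observation appears under each label."""
    by_label = {}
    for label, observation in notes:
        by_label.setdefault(label, Counter())[observation] += 1
    return by_label


tallies = tally_observations(notes)
for label, counts in tallies.items():
    # most_common() lists the most frequent observation first.
    print(label, counts.most_common())
```

If one observation dominates the weak group, for example repeated quick fades, that points at a specific setting to revisit rather than a general sense that the output is noisy.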

What this review should help you change

A good review process should tell you what kind of adjustment is probably needed next. If too many weak names are getting through, the workflow may be too loose. If good names are buried in clutter, your market scope may be too broad.

That is the real practical value here. You stop changing settings randomly. You start changing them for reasons.

Common review mistakes

The most common mistakes are judging every surfaced name by excitement, treating all activity as proof of quality, reviewing with no written standard, and changing the workflow too fast after mixed results.

Even one short written sentence per candidate makes future workflow changes calmer and more explainable.

A useful checklist

When PriceWatch surfaces something, ask:

- Does it fit the job of the workflow?

- Does the move look meaningful enough to deserve attention?

- Can you explain why it stands out?

- Does it justify a clear next step?

- Would you want more of this kind of candidate, or less?

If most answers are weak or unclear, that is still valuable feedback. It tells you the workflow needs work.

Closing thought

Strong candidates usually feel clearer, easier to explain, and more worth the next step. Weak candidates usually feel noisier, harder to justify, or less useful once you slow down and inspect them properly.

That is really the skill this post is about. Not prediction, just better judgment about what deserves attention and what does not.

Who this is for (and who it is not for)

Good fit if

- You want a calmer, more useful way to judge whether surfaced candidates deserve more attention.

- You are trying to separate strong candidates from clutter before changing your workflow again.

- You want candidate review tied to what PriceWatch actually surfaces in practice.

Not a fit if

- You want every surfaced name to be treated like an immediate trade signal.

- You want excitement to replace written review standards.

- You want the post to tell you what to trade rather than how to review what surfaced.

FAQ

Is a strong candidate the same as a good trade?

No. A strong candidate is one that deserves further review. A later decision still depends on your broader rules and judgment.

What if most candidates feel unclear?

That usually means the workflow needs tightening, simplification, or a clearer review standard.

Should I write notes on every surfaced candidate?

Not necessarily at full length, but even one line on why something felt strong or weak can make your reviews noticeably more consistent.

Why use test mode for this?

Because it is the safest place to build the review habit. The point is to learn what deserves attention before real-funds consequences are involved.